Several months ago, Tesla agreed to recall 53,822 self-driving vehicles that were programmed to roll through a stop sign. The company decided that vehicles moving at less than 5.6 miles an hour need not stop if the car detected no pedestrians, cyclists, or cars.

However, federal regulators said, “No.” Not only was the “rolling stop” functionality unsafe but, in certain states, it was illegal. Responding, Tesla used an over-the-air software update to disable the “rolling stop” capability.
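To see why a stop sign becomes a programming decision, here is a minimal sketch of the rule as the paragraphs above describe it. It is not Tesla's code. The names `Scene`, `may_roll_through`, and `rolling_stop_enabled` are hypothetical, and the only numbers used are the ones reported above.

```python
from dataclasses import dataclass

# Speed threshold reported above: below it, the car was allowed to roll through.
ROLLING_STOP_MAX_MPH = 5.6


@dataclass
class Scene:
    """Hypothetical snapshot of what the car senses at a stop sign."""
    speed_mph: float
    pedestrians: int = 0
    cyclists: int = 0
    other_cars: int = 0


def may_roll_through(scene: Scene, rolling_stop_enabled: bool) -> bool:
    """Return True if this illustrative rule would let the car skip a full stop."""
    if not rolling_stop_enabled:
        # After the over-the-air update, the capability is switched off.
        return False
    nobody_detected = (
        scene.pedestrians == 0
        and scene.cyclists == 0
        and scene.other_cars == 0
    )
    return nobody_detected and scene.speed_mph < ROLLING_STOP_MAX_MPH


# Before the recall: a slow car at an empty intersection rolls through.
print(may_roll_through(Scene(speed_mph=4.0), rolling_stop_enabled=True))
# After the update: the same situation now requires a full stop.
print(may_roll_through(Scene(speed_mph=4.0), rolling_stop_enabled=False))
```

In this sketch, the regulators' "No" amounts to flipping a single flag, yet someone still had to choose the threshold and decide who counts as being in the way.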

The stop sign functionality was just one of countless ethical programming decisions.

AV Ethical Dilemmas

When identifying ethical dilemmas for self-driving vehicles, many of us start with the trolley problem. Most generally explained, the trolley problem is about a runaway vehicle speeding toward five people. As it approaches a fork, the engineer can divert it to a path that kills one person or do nothing. You see the choice. It is to actively kill one person or passively let a machine kill several.

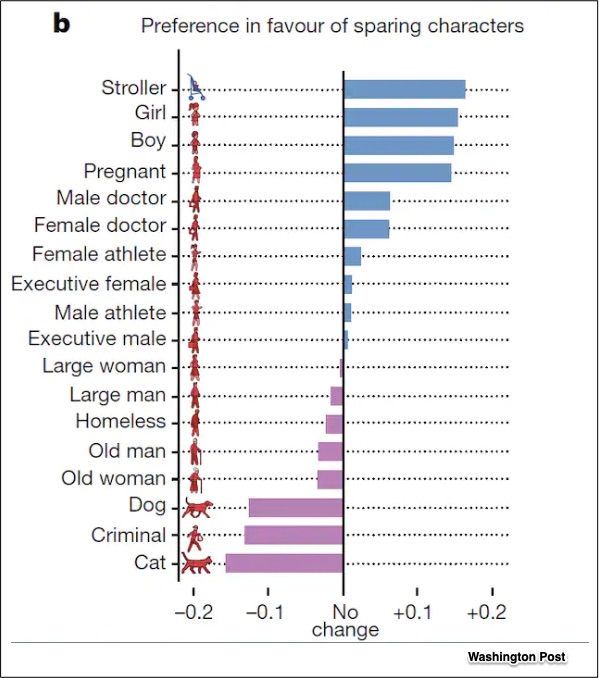

The trolley problem and others like it typify the AV ethical dilemmas that programmers have to resolve. One survey from the MIT Moral Machine conveyed our preferences:

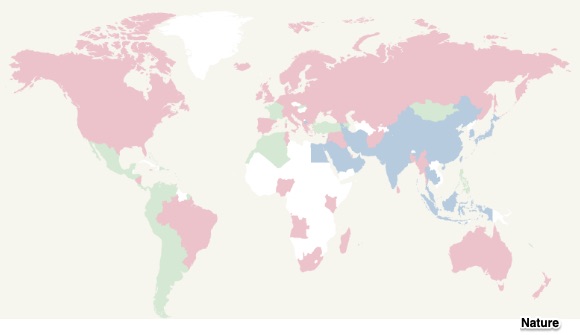

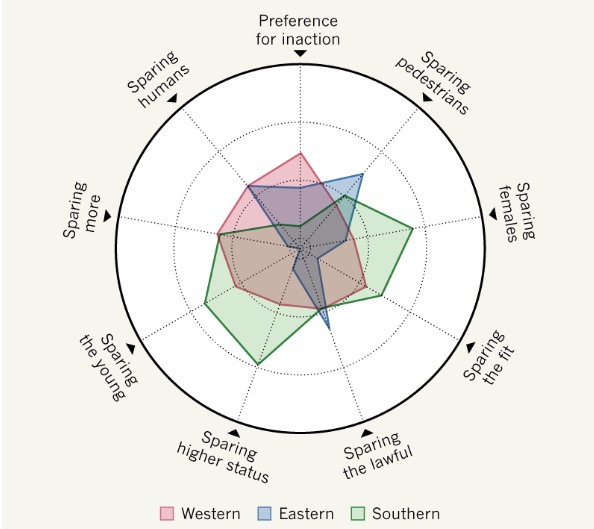

In addition, in more affluent countries with established institutions (like Finland and Japan), survey participants were more likely to condemn an illegal jaywalker. Gender, age, education, income, and politics also made a difference. Still, it came down to three cultural groups. For example, favoring the young over the old was much less pronounced in the Eastern Cluster than in the Southern Cluster. And faced with the trolley problem, the East believed it was wrong to act.

These are the three cultural groups:

- The Western Cluster: Values that related to Christianity characterized the answers from North America and some of Europe.

- The Eastern Cluster: People with a Confucian or Islamic heritage from places that included Japan, Indonesia, and Pakistan tended to have similar opinions.

- The Southern Cluster: The third group, not explicitly tied to specific religious roots, was from Central and South America, France, and former French colonies.

This is where we differ (and just some of the dilemmas):

Our Bottom Line: Opportunity Cost

For self-driving cars, ethical programming has become a reality. Because it necessitates countless choices, each decision will create an opportunity cost. Defined as the sacrificed alternative, an opportunity cost is the benefit we give up. Smaller details will count, too. For example, if a car is programmed to drive to the right side of a lane to distance itself from a truck, it could endanger a car on the left. The response in China will differ from the one in the U.K. We also differ on animals and gender. It is daunting.

My sources and more: Still relevant, our sources from 2019 included Nature, a 99% Invisible podcast, and MIT’s Moral Machine quiz. From there, for an update, we looked at this paper and this newer study. Then, if you want more, the ethics debate continues here and here. (Please note that several of today’s sentences were in a past econlife post.)
